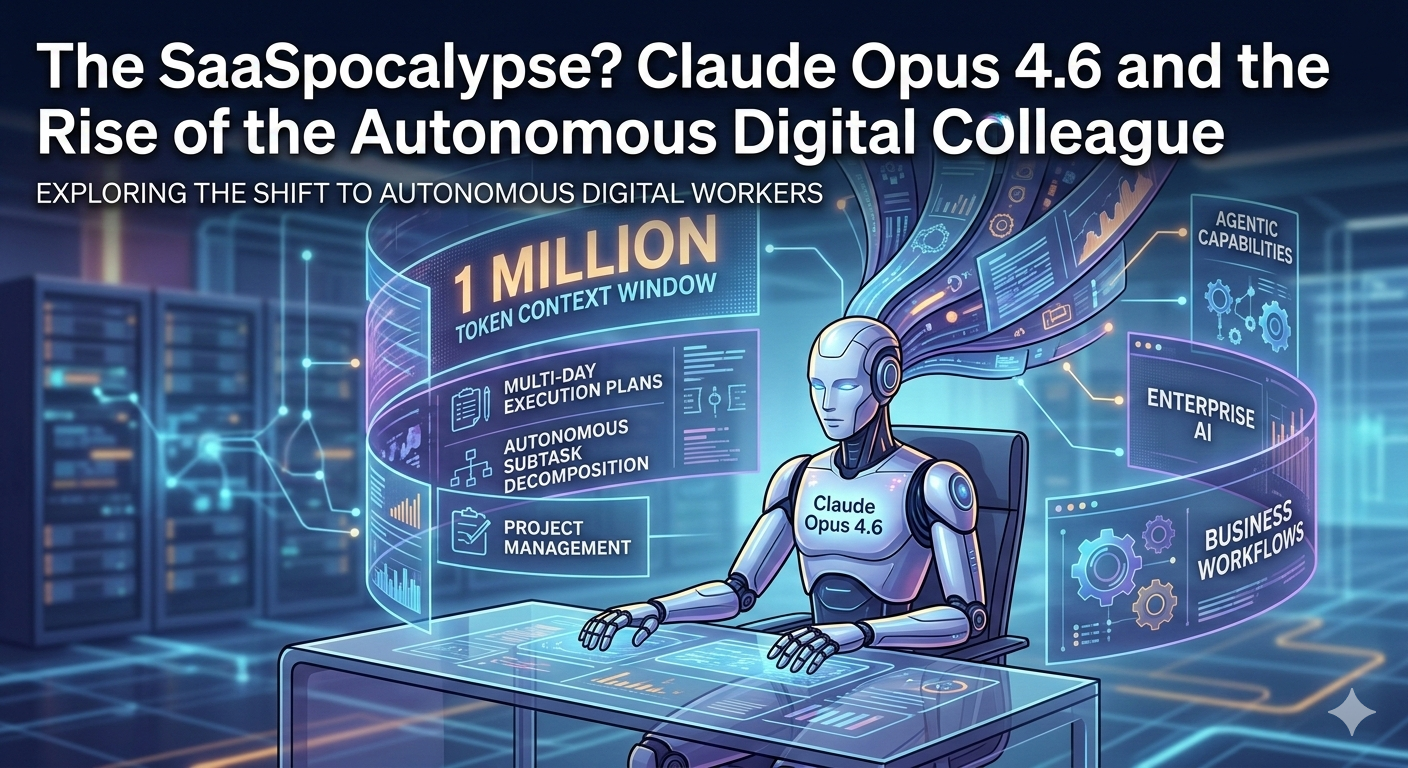

The release of Claude Opus 4.6 on February 5, 2026, shifted the focus from “chatting” to “operating.” While the 1 million token context window is the headline, the “agentic” backbone is what makes it a significant step forward for professional workflows.

Here is a deep dive into how these features actually work:

🧠 1. The 1 Million Token Window (Beta)

Before this update, models often suffered from “context rot,” also known as the “needle in the haystack” problem: details buried in the middle of a massive file were forgotten or retrieved unreliably.

- Scale: 1 million tokens is equivalent to roughly 750,000 words, or several entire codebases.

- Performance: On the MRCR v2 benchmark (a test for information retrieval in long texts), Opus 4.6 scored 76%, compared to only 18.5% for Sonnet 4.5. This suggests it can track relationships between distant parts of a document rather than just skimming.

- Adaptive Thinking: This is a new “thinking” layer. Instead of a simple on/off toggle, the model now decides on its own how much compute to spend based on the query’s difficulty. If you ask a complex legal question, it will “think” for longer before answering.
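To get a feel for the scale figure, here is a minimal sketch that turns the “1 million tokens is roughly 750,000 words” rule of thumb into a quick size check. The ratio of 0.75 words per token is only the approximation implied by that figure, and the helper names `estimate_tokens` and `fits_in_window` are made up for this illustration. A real tokenizer will give different counts, especially for code.

```python
# Rule of thumb implied by "1,000,000 tokens ~ 750,000 words".
# This is an approximation, not how any real tokenizer counts.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    """Rough token estimate based on a whitespace word count."""
    words = len(text.split())
    return round(words / WORDS_PER_TOKEN)


def fits_in_window(text: str, window_tokens: int = 1_000_000) -> bool:
    """Return True if the estimated token count fits in the window."""
    return estimate_tokens(text) <= window_tokens


if __name__ == "__main__":
    sample = "word " * 750_000
    print(estimate_tokens(sample), fits_in_window(sample))
```

Treat the result as a first-pass filter only: if a document lands anywhere near the limit, count tokens with the provider's own tooling before relying on it.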

🤖 2. Advanced “Agentic” Capabilities

This is the transition from AI as a “Co-pilot” to AI as an “Autopilot.”

- Project Decomposition: If you give Opus 4.6 a goal like “Audit this entire legacy Python repository and migrate it to Rust while updating the documentation,” it doesn’t just start typing. It creates a multi-day execution plan, breaks it into subtasks, and works through them.

- Autonomous Iteration: It can now navigate terminals (scoring 65.4% on Terminal-Bench 2.0) and use computer interfaces (72.7% on OSWorld) to verify its own work. If it writes code that fails a test, it sees the error, investigates the cause, and fixes it without human intervention.

- Parallel Agent Teams: In a research preview for “Claude Code,” Opus 4.6 can now coordinate multiple “sub-agents.” It functions like a project manager, assigning one sub-agent to write tests while another refactors the logic.
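The “write, test, read the error, fix, retest” cycle described under Autonomous Iteration can be sketched as a small loop. This is only an illustration of the control flow, not how Opus 4.6 or Claude Code is implemented: `iterate_until_passing`, `run_tests`, and `propose_fix` are hypothetical names, and in a real agent the fix step would be a model call rather than a string replacement.

```python
from typing import Callable, Optional, Tuple


def iterate_until_passing(
    code: str,
    run_tests: Callable[[str], Optional[str]],  # returns an error message, or None on success
    propose_fix: Callable[[str, str], str],     # (code, error) -> revised code
    max_runs: int = 5,
) -> Tuple[str, bool, int]:
    """Run tests, feed any failure back into a fixer, and repeat."""
    runs = 0
    while True:
        error = run_tests(code)
        runs += 1
        if error is None:
            return code, True, runs
        if runs >= max_runs:
            return code, False, runs  # give up and hand back to a human
        code = propose_fix(code, error)


# Toy stand-ins so the loop can be run end to end.
def run_tests(code: str) -> Optional[str]:
    namespace: dict = {}
    exec(code, namespace)  # fine for a toy example; never exec untrusted code
    result = namespace["add"](2, 3)
    return None if result == 5 else f"add(2, 3) returned {result}, expected 5"


def propose_fix(code: str, error: str) -> str:
    # A real agent would reason about `error`; this toy fixer knows one bug.
    return code.replace("a - b", "a + b")


if __name__ == "__main__":
    buggy = "def add(a, b):\n    return a - b\n"
    final_code, passed, runs = iterate_until_passing(buggy, run_tests, propose_fix)
    print(passed, runs)
```

The `max_runs` cap is a deliberate design choice: an autonomous loop needs a point at which it stops and escalates instead of retrying forever.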

🛠️ 3. New Enterprise Controls

To make these autonomous agents safe for business, Anthropic introduced several “steering” features:

- Effort Controls: Developers can set four levels of intensity: Low, Medium, High, and Max. This allows you to save money on simple tasks while letting the AI “obsess” over critical financial or security audits.

- Context Compaction: To prevent the AI from hitting its 1M limit during weeks-long projects, it now uses server-side summarization to “compress” the history of the conversation while keeping the most vital data “fresh.”

- Computer Use 2.0: With the acquisition of the startup Vercept, Anthropic has improved the model’s ability to interact with legacy software and UIs that don’t have APIs, allowing it to navigate internal HR portals or old banking systems.
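The idea behind Context Compaction can be illustrated with a small client-side sketch: once the history exceeds a budget, older turns are collapsed into a single summary while the most recent turns stay verbatim. This is not Anthropic's server-side implementation, whose details are not described here. The function names, the one-token-per-word estimate, and the placeholder `summarize` callable are all assumptions for the sake of the example.

```python
from typing import Callable, List


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def compact_history(
    messages: List[str],
    budget: int,
    keep_recent: int,
    summarize: Callable[[List[str]], str],
) -> List[str]:
    """Collapse older messages into one summary when the history exceeds `budget`."""
    total = sum(estimate_tokens(m) for m in messages)
    if total <= budget or len(messages) <= keep_recent:
        return list(messages)  # nothing to compact
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    return [summarize(older)] + recent


if __name__ == "__main__":
    history = ["one two three", "four five six", "seven eight", "nine"]
    # Placeholder summarizer; a real system would call a model here.
    compacted = compact_history(
        history,
        budget=5,
        keep_recent=2,
        summarize=lambda older: f"[summary of {len(older)} messages]",
    )
    print(compacted)
```

Keeping the last few turns untouched is what keeps recent data “fresh”: the summary loses detail, so it is applied only to the part of the history least likely to be needed word for word.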

💰 Pricing & Availability

- Pricing: Remains at $15/1M input and $75/1M output tokens for standard use, but premium pricing kicks in for prompts exceeding 200,000 tokens.

- Where to find it: It is currently live on the Claude Developer Platform, Amazon Bedrock, and GitHub Copilot (as the new flagship model choice).
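For budgeting, the per-token arithmetic can be sketched as follows. The rates are passed in as arguments, and the example uses the figures quoted in this article. The premium rate for prompts over 200,000 tokens is not stated above, so `premium_multiplier` is a hypothetical placeholder that defaults to no surcharge. Applying it to the whole request is also an assumption, so check the official pricing page before relying on any number.

```python
def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_rate_per_m: float,
    output_rate_per_m: float,
    long_prompt_threshold: int = 200_000,
    premium_multiplier: float = 1.0,  # hypothetical: the real premium rate is not given here
) -> float:
    """Estimate the dollar cost of one request from per-million-token rates."""
    base = (input_tokens * input_rate_per_m + output_tokens * output_rate_per_m) / 1_000_000
    # Assumption: the premium applies to the entire request once the prompt
    # exceeds the threshold.
    if input_tokens > long_prompt_threshold:
        return base * premium_multiplier
    return base


if __name__ == "__main__":
    # 100,000 input tokens and 10,000 output tokens at the rates quoted in this article.
    print(estimate_cost(100_000, 10_000, input_rate_per_m=15.0, output_rate_per_m=75.0))
```

With those inputs the standard-rate estimate works out to 100,000 × $15 / 1M + 10,000 × $75 / 1M = $1.50 + $0.75 = $2.25.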
