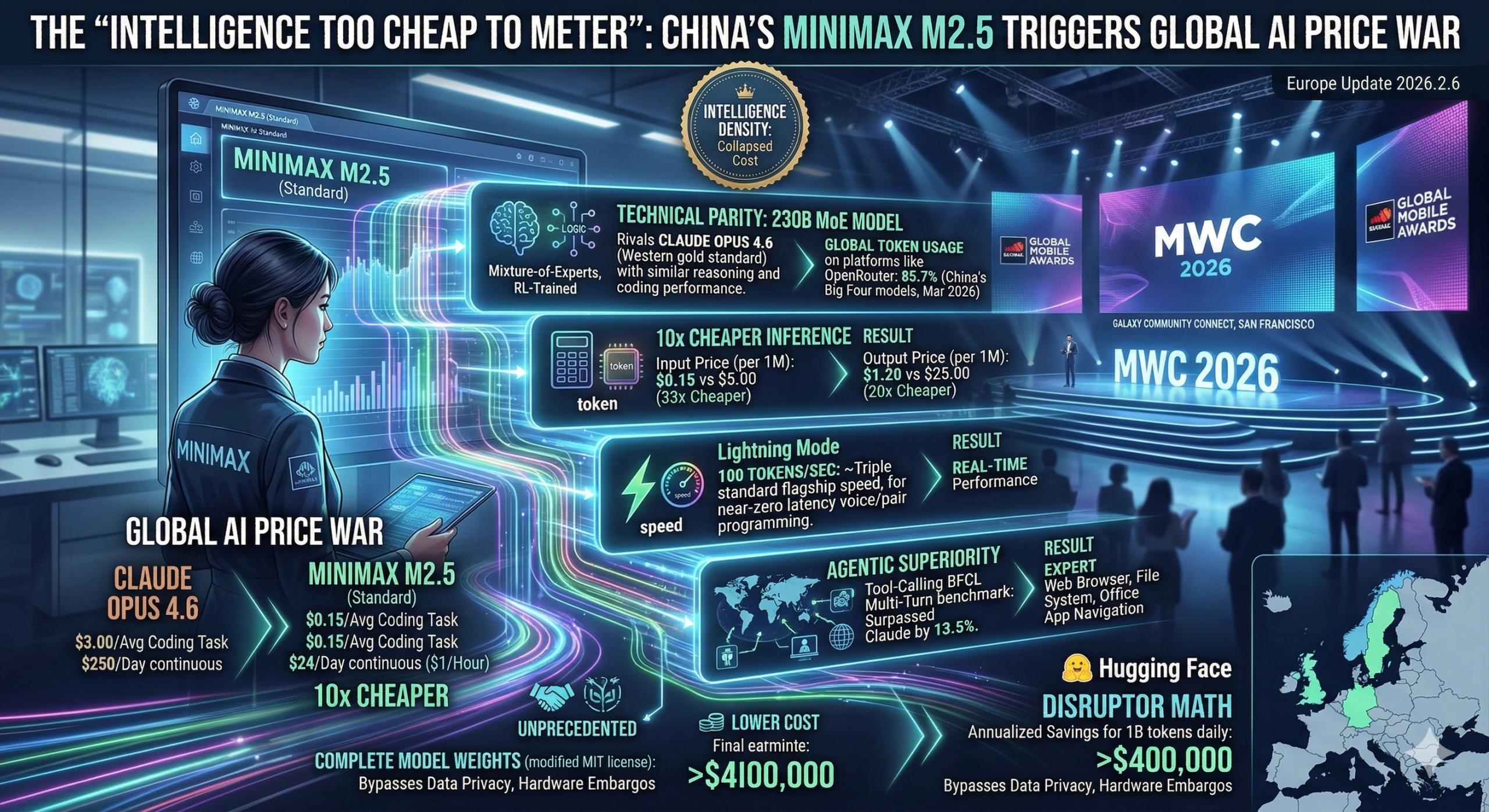

SHANGHAI / HONG KONG — March 7, 2026 — Just weeks after its successful $619 million IPO in Hong Kong, Chinese AI titan MiniMax has sent shockwaves through the global tech industry with the full international release of MiniMax M2.5. The model is the first from a non-Western developer to achieve “technical parity” with the industry’s gold standard, Claude Opus 4.6, while operating at a staggering one-tenth of the cost.

The release has officially triggered what analysts are calling the “First Global AI Price War,” as developers flock to the open-weight model to escape the high margins of Silicon Valley’s proprietary platforms.

Technical Parity: The “Architect” Emerges

MiniMax M2.5 is a 230B-parameter Mixture-of-Experts (MoE) model that distinguishes itself through Reinforcement Learning (RL). Unlike models that simply predict the next word, M2.5 was trained in over 200,000 real-world environments to “think like an architect”—planning system structures and UI wireframes before writing a single line of code.

-

Coding Excellence: On the SWE-bench Verified benchmark (real-world GitHub issue resolution), M2.5 scored 80.2%, trailing Claude Opus 4.6 (80.8%) by a negligible 0.6%.

-

Multilingual Lead: In complex, multi-file coding tasks (Multi-SWE-bench), M2.5 actually surpassed the Western competition with a score of 51.3% vs. Opus’s 50.3%.

-

Agentic Superiority: The model’s most significant lead is in tool-calling. In the BFCL Multi-Turn benchmark, it outperformed Claude by a massive 13.5%, making it the most efficient “Agent” currently available for navigating web browsers and managing file systems.

[Image showing a global heat map of AI token usage on OpenRouter. China’s “Big Four” models (MiniMax, Kimi, GLM, and DeepSeek) now occupy 85.7% of the total usage volume as of March 2026.]

The Math of the Disruptor: 10x Cheaper

The core of the “MiniMax Disruption” is its economic model. By optimizing for “Intelligence Density,” MiniMax has collapsed the cost of high-end inference.

| Metric | Claude Opus 4.6 | MiniMax M2.5 (Standard) | Cost Advantage |

| Input Price (per 1M) | $5.00 | $0.15 | 33x Cheaper |

| Output Price (per 1M) | $25.00 | $1.20 | 20x Cheaper |

| Avg. Cost per Coding Task | ~$3.00 | ~$0.15 | 20x Cheaper |

| Continuous Operation | ~$250/day | ~$24/day ($1/hour) | 10x Cheaper |

“The 0.6% performance gap does not justify a 20x price premium,” says Michele Catasta, a leading AI infrastructure analyst. “For an enterprise processing 1 billion tokens daily, switching to MiniMax represents a monthly saving of over $400,000.”

“Intelligence Too Cheap to Meter”

MiniMax is marketing the model under the slogan “Intelligence too cheap to be metered.” To back this up, they offer a Lightning Mode that delivers 100 tokens per second—roughly triple the speed of current Western flagship models—allowing for near-zero-latency voice conversations and real-time pair programming.

Beyond code, M2.5 has been fine-tuned by professionals in finance, law, and social sciences to produce “deliverable” office outputs. It can natively generate formatted Word documents, complex PowerPoint decks, and Excel financial models with a 59% win rate over mainstream models in head-to-head comparisons.

Geopolitical Implications and Open Weights

Perhaps most significantly for the developer community, MiniMax has released the complete model weights on Hugging Face under a modified MIT license. This allows companies to deploy M2.5 locally, bypassing data privacy concerns and hardware embargos that have previously hampered global collaboration.

700 701 702 703 704 705 706 707 708 709 710 711 712 713 714 715 716 717 718 719 720 721 722 723 724 725 726 727 728 729 730 731 732 733 734 735 736 737 738 739 740 741 742 743 744 745 746 747 748 749 750 751 752 753 754 755 756 757 758 759 760 761 762 763 764 765 766 767 768 769 770 771 772 773 774 775 776 777 778 779 780 781 782 783 784 785 786 787 788 789 790 791 792 793 794 795 796 797 798 799 800 801 802 803 804 805 806 807 808 809 810 811 812 813 814 815 816 817 818 819 820 821 822