MOUNTAIN VIEW, CA — February 25, 2026 — Google has dramatically upended the landscape of multimodal AI today with the announcement of Gemini 3.1 Pro and its groundbreaking new capability: Agentic Vision.

This feature turns AI visual understanding from one-shot snapshot classification into an active, multi-step investigative process. Previously available as a research preview on Gemini 3 Flash, Agentic Vision is now a core component of Google’s flagship model architecture.

From “Watching” to “Investigating”

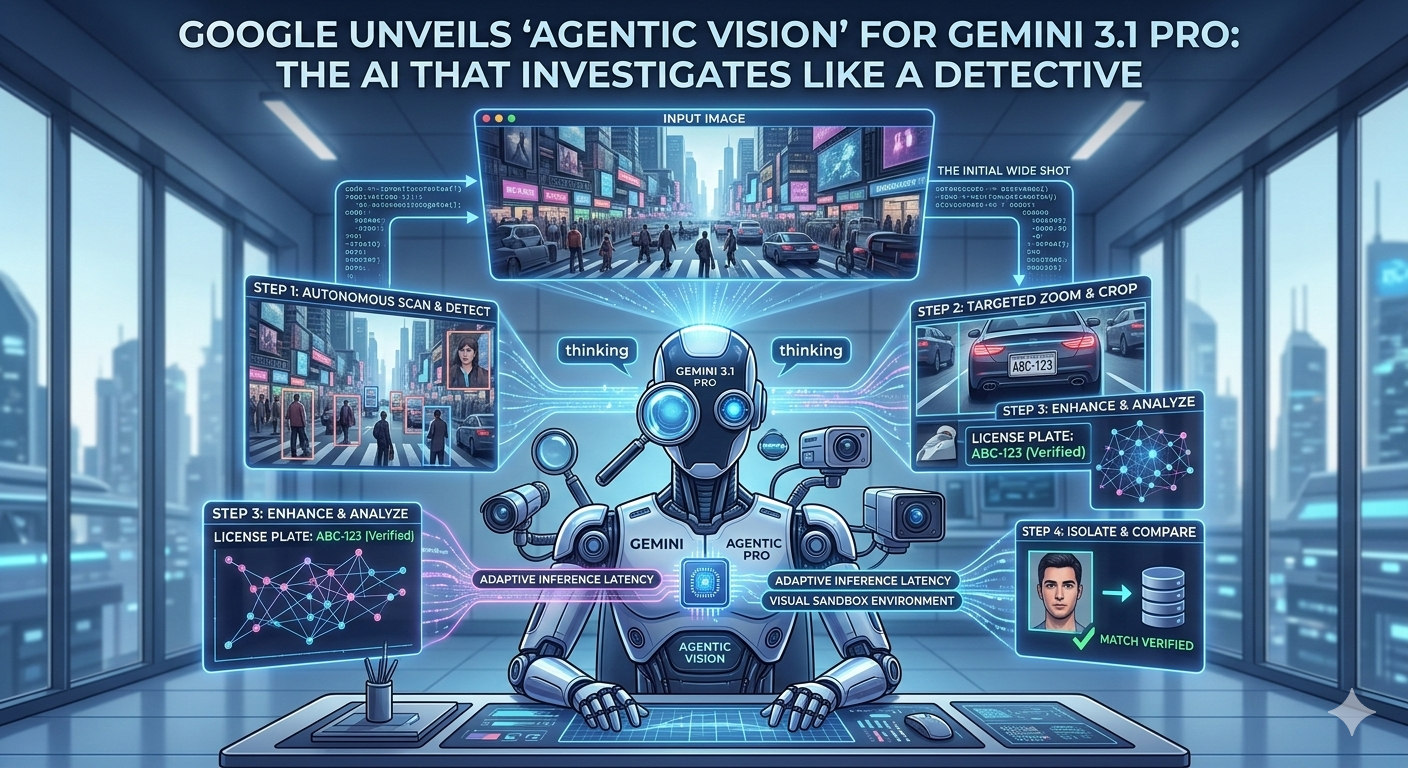

The defining breakthrough of Agentic Vision is its ability to interact with visual data over time, breaking down complex visual queries into sequential “thinking” and “action” steps. Whereas current multimodal models analyze an image once and generate a static response, Gemini 3.1 Pro can repeatedly crop, zoom into, and re-examine the pixel data before it answers.

“We are moving past the ‘first impression’ era of AI vision,” said Dr. Sissie Hsiao, Vice President and General Manager, Gemini experiences. “If you ask a traditional model ‘What is in this parking lot?’, it might list five cars. If you ask Gemini 3.1 Pro, it might first analyze the wide shot, realize several cars are obscured, and then autonomously decide to create five zoomed-in, cropped sub-images of individual license plates to provide an exhaustive, verified count. It doesn’t just look; it investigates.”
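The alternation of “thinking” and “action” steps described above can be pictured as a work queue of image regions. The following is a minimal sketch under stated assumptions, not Google's implementation: `investigate`, `Look`, `Box`, and `max_actions` are hypothetical names, and `toy_look` is a hard-coded stand-in for the model's judgment.

```python
from collections import deque
from typing import Callable, Optional

# Hypothetical types for illustration; none of these names come from a Google API.
Box = tuple[int, int, int, int]  # (top, left, bottom, right)
# A "look" inspects one region and returns (finding or None, sub-regions worth zooming into).
Look = Callable[[Box], tuple[Optional[str], list[Box]]]


def investigate(full_frame: Box, look: Look, max_actions: int = 10):
    """Inspect the full frame, then any follow-up regions, until the queue
    is empty or the action budget is spent. Returns (findings, audit trail)."""
    findings: list[str] = []
    trail: list[str] = []
    queue = deque([full_frame])
    actions = 0
    while queue and actions < max_actions:
        region = queue.popleft()
        actions += 1
        trail.append(f"act: inspect {region}")
        finding, follow_ups = look(region)
        if finding is not None:
            findings.append(finding)
        for sub in follow_ups:
            trail.append(f"think: {sub} needs a closer look")
            queue.append(sub)
    return findings, trail


def toy_look(region: Box):
    """Stand-in for the model: the wide shot yields two crops, each crop yields a car."""
    if region == (0, 0, 100, 100):
        return None, [(0, 0, 50, 50), (50, 50, 100, 100)]
    return f"car at {region}", []


findings, trail = investigate((0, 0, 100, 100), toy_look)
```

The `max_actions` cap is a design choice in this sketch that keeps the loop bounded even if every inspection proposes further crops.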

The “Investigative Loop” in Action

Google demonstrated several scenarios where Agentic Vision fundamentally changes user workflows:

- Industrial Inspections: A technician takes a single wide-angle drone photo of a wind turbine. Gemini 3.1 Pro, tasked with a maintenance check, identifies minor surface damage. It then autonomously triggers a macro-zoom operation on that region of the image, performs edge detection to measure the length of the damaged area, and generates an engineering report, all derived from one initial input.

- Medical Imaging Triage: When analyzing a 3D MRI volume, the model can navigate through slices, isolate specific organs, and autonomously apply texture analysis to suspicious nodules, simulating the iterative “deep look” a radiologist performs.

- Document Forensics: Given a photo of a multi-page handwritten contract, Gemini 3.1 Pro can crop paragraph by paragraph, run handwriting translation, and then “flip the page” (if contextual video is available) to verify signatures, creating a detailed audit trail.
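The crop-then-measure step in the inspection scenario can be illustrated with plain Python lists. This is only a sketch, assuming a grayscale image stored as rows of integers and a simple brightness-difference threshold standing in for whatever edge detection the model actually applies; `crop`, `horizontal_edges`, and `edge_height` are hypothetical helper names.

```python
def crop(image, top, left, height, width):
    """Return a new sub-image; the input image is not modified."""
    return [row[left:left + width] for row in image[top:top + height]]


def horizontal_edges(image, threshold):
    """Mark each pixel whose brightness differs from its right-hand
    neighbour by more than `threshold`."""
    return [
        [abs(row[x + 1] - row[x]) > threshold for x in range(len(row) - 1)]
        for row in image
    ]


def edge_height(edges):
    """Count rows containing at least one edge pixel: a crude measure
    of a defect's vertical extent, in pixels."""
    return sum(1 for row in edges if any(row))


# Toy 6x6 image: bright surface (200) with a dark vertical defect (20)
# in column 3, rows 1 through 4.
image = [[200] * 6 for _ in range(6)]
for y in range(1, 5):
    image[y][3] = 20

patch = crop(image, top=0, left=1, height=6, width=4)
defect_rows = edge_height(horizontal_edges(patch, threshold=100))
```

Converting `defect_rows` into a physical length would additionally require the image's scale, which this sketch does not model.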

Tech Specs: Gemini 3.1 Pro and ‘Adaptive Inference’

This leap is made possible by the unique architecture of the Gemini 3 series. While keeping the efficient Mixture-of-Experts (MoE) design that debuted with Gemini 1.5, Gemini 3.1 Pro introduces two key innovations:

- Adaptive Inference Latency (AIL): The model no longer operates on a single fixed response time. It autonomously determines how many investigative “hops” (crop, zoom, enhancement steps) are required by the complexity of the query. For a simple request (“Is this a dog?”), it responds instantly. For an investigation (“Audit this aerial map for security risks”), it may take 15–20 seconds to process multiple visual sub-stages.

- A “Visual Sandbox” Environment: Gemini 3.1 Pro does not alter the user’s original image. Instead, it operates on a sandboxed copy within Google’s compute infrastructure, generating and discarding hundreds of crops and enhanced data points as needed during the investigation.
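Both ideas above, a query-dependent hop budget and a disposable working copy, can be sketched in a few lines. Everything here is an illustrative assumption: `plan_hops` uses a toy keyword heuristic rather than anything the model is known to do, and `VisualSandbox` is a hypothetical class, not part of any Google SDK.

```python
import copy


def plan_hops(query, max_hops=8):
    """Toy heuristic: each investigative verb in the query adds four hops,
    capped at max_hops. Queries with none of these verbs get zero hops."""
    investigative = ("audit", "inspect", "verify", "count", "measure")
    hits = sum(word in query.lower() for word in investigative)
    return min(max_hops, hits * 4)


class VisualSandbox:
    """Works on a private deep copy so the caller's image is never modified."""

    def __init__(self, image):
        self._work = copy.deepcopy(image)
        self.scratch = []  # intermediate crops, dropped on close()

    def enhance(self, gain):
        """Scale brightness of the working copy, clamped to 255."""
        self._work = [[min(255, int(p * gain)) for p in row] for row in self._work]

    def crop(self, top, left, bottom, right):
        """Cut a patch from the working copy and remember it as scratch data."""
        patch = [row[left:right] for row in self._work[top:bottom]]
        self.scratch.append(patch)
        return patch

    def close(self):
        """Discard all intermediate data."""
        self.scratch.clear()
        self._work = []


original = [[10, 20], [30, 40]]
sandbox = VisualSandbox(original)
sandbox.enhance(2.0)
top_row = sandbox.crop(0, 0, 1, 2)
sandbox.close()
```

After `close()`, `original` still holds its initial values, which is the property the sandbox is meant to guarantee.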

Agentic Vision Integration with Vertex AI

Google is integrating Agentic Vision deeply into its enterprise cloud ecosystem, Vertex AI. Developers can now use the Gemini-3.1-Pro-Agentic API to configure investigative parameters, such as the maximum number of visual actions the model may take or the validation rules its output must satisfy.
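The announcement does not give the API's request schema, so the following only illustrates what the two parameters mentioned above might look like on the client side. `InvestigationConfig`, its fields, and `validate_output` are hypothetical names invented for this sketch; they are not taken from the Vertex AI SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InvestigationConfig:
    """Hypothetical client-side settings; not an actual Vertex AI type."""
    max_visual_actions: int = 10
    required_output_fields: tuple = ()

    def __post_init__(self):
        if self.max_visual_actions < 1:
            raise ValueError("max_visual_actions must be at least 1")


def validate_output(report, actions_used, config):
    """Return a list of rule violations; an empty list means the report passes."""
    problems = []
    if actions_used > config.max_visual_actions:
        problems.append(
            f"used {actions_used} actions, limit is {config.max_visual_actions}"
        )
    for name in config.required_output_fields:
        if name not in report:
            problems.append(f"missing field: {name}")
    return problems


config = InvestigationConfig(
    max_visual_actions=5,
    required_output_fields=("vehicle_count", "evidence"),
)
problems = validate_output({"vehicle_count": 5}, actions_used=3, config=config)
```

Here `problems` reports the missing `evidence` field, showing how an output rule could reject an otherwise plausible answer.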

“The implication for insurance, security, and agriculture is massive,” said Thomas Kurian, CEO of Google Cloud. “We are providing the infrastructure for systems that don’t just alert a human that something might be wrong, but actively collect and analyze the granular evidence needed for a human to make a confident decision.”

Availability

Gemini 3.1 Pro with Agentic Vision is available starting today in private preview for developers and enterprise customers via Vertex AI and Google AI Studio. A consumer rollout within the Gemini Advanced subscription service is planned for early Q2.
