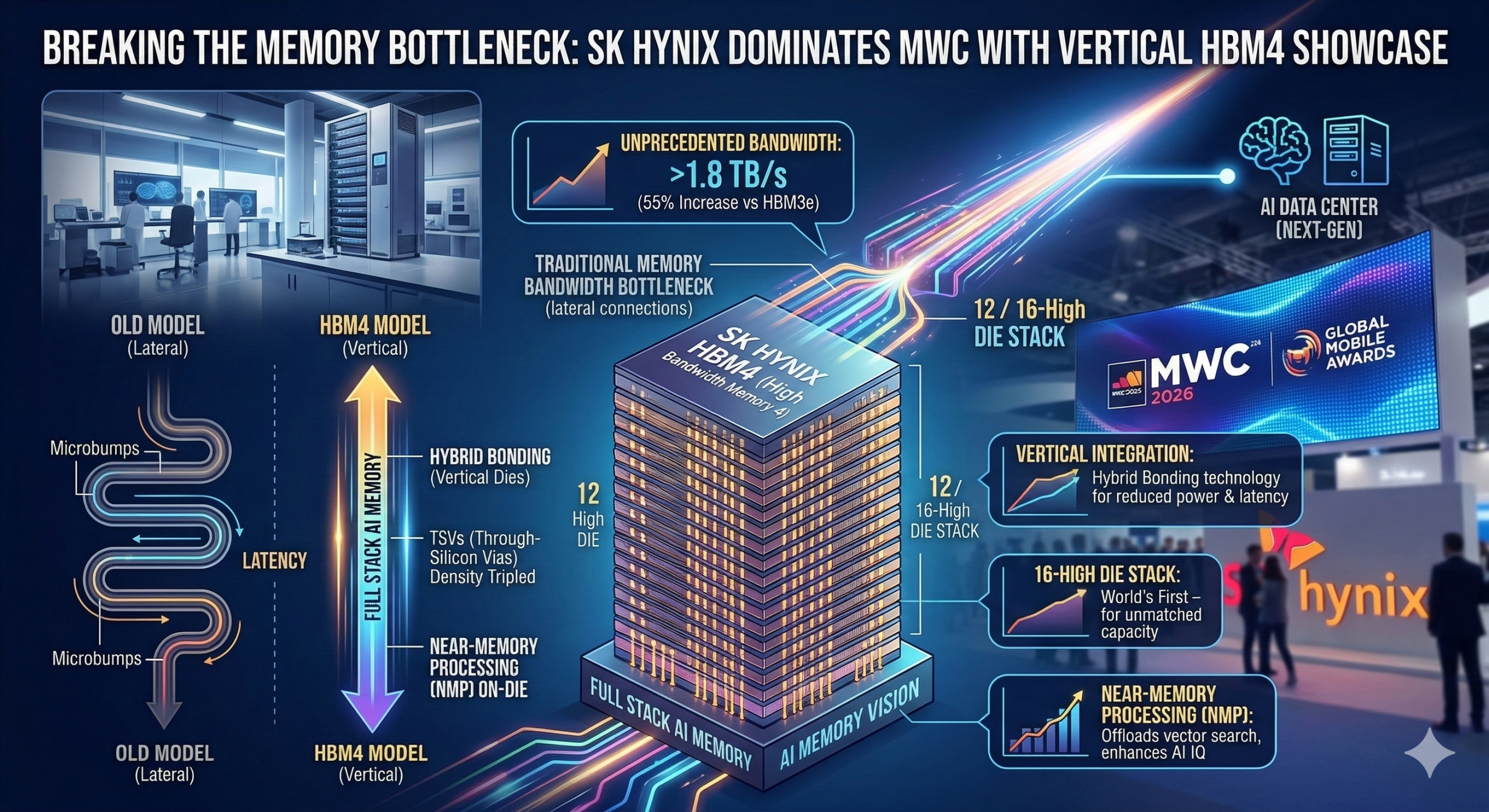

BARCELONA, Spain — March 5, 2026 — Mobile World Congress (MWC), typically the playground of consumer hardware, was dominated this morning by fundamental infrastructure. Leading the charge, South Korea’s SK hynix officially lifted the veil on HBM4 (High Bandwidth Memory 4), declaring its vision of “Full Stack AI Memory.”

This release marks a massive technological leap designed to overcome the critical “memory bottleneck” that has begun to throttle the growth of massive Artificial Intelligence (AI) data centers. By moving away from purely lateral chip connections to advanced vertical integration, SK hynix claims HBM4 is the essential foundation for the next generation of AI computation.

Moving Vertical: The HBM4 Architecture

Traditional memory architectures move data relatively slowly over long copper traces on the circuit board. In contrast, HBM (High Bandwidth Memory) stacks memory dies vertically and mounts the stack in the same package as the AI processor, typically right beside it on a silicon interposer. HBM4 pushes this concept further.

- Advanced 3D Stacking: SK hynix confirmed that HBM4 utilizes Hybrid Bonding technology, a technique it pioneered on HBM3e. This allows multiple DRAM dies to be bonded directly to one another without traditional microbumps, which shortens the distance data travels and reduces power consumption and latency.

- Vertical Integration (TSV): The memory stack is connected via Through-Silicon Vias (TSVs), thousands of microscopic vertical channels that pass directly through the memory dies. In HBM4, SK hynix has tripled the density of these TSVs compared to HBM3e, allowing far more concurrent data pathways.

The IQ Era Requires Massive Bandwidth

During the MWC showcase, SK hynix emphasized that the current “IQ Era” of AI agents—which shift models from reactive chat to proactive multi-agent planning—demands memory with unprecedented speed and capacity. A model cannot process a complex multi-step logical argument (like “Audit this legacy COBOL and rewrite it in Python, and verify it with a test suite”) if it cannot rapidly access and move the required tokens and memory states.
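The bandwidth argument can be made concrete with a back-of-the-envelope estimate. The sketch below assumes a purely memory-bound workload in which every generated token requires reading a fixed amount of data (weights plus cached state) from memory once. The 100 GB working set and the function name are hypothetical, chosen only for illustration, and the two bandwidth values are the approximate HBM3e and HBM4 figures quoted in this article.

```python
def max_tokens_per_second(bandwidth_tb_s: float, gb_read_per_token: float) -> float:
    """Upper bound on tokens/s when generation is limited purely by memory bandwidth."""
    if bandwidth_tb_s <= 0 or gb_read_per_token <= 0:
        raise ValueError("bandwidth and data read per token must be positive")
    bandwidth_gb_s = bandwidth_tb_s * 1000.0  # decimal units: 1 TB/s = 1000 GB/s
    return bandwidth_gb_s / gb_read_per_token


# Hypothetical working set: 100 GB of weights and state read once per token.
GB_READ_PER_TOKEN = 100.0

for label, bandwidth in (("HBM3e", 1.2), ("HBM4", 1.8)):
    rate = max_tokens_per_second(bandwidth, GB_READ_PER_TOKEN)
    print(f"{label}: at most {rate:.1f} tokens/s")
```

Under these assumptions the ceiling scales linearly with bandwidth, which is why faster memory translates directly into faster multi-step agent workloads. Real systems also depend on compute, caching, and batching, none of which this estimate models.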

Key HBM4 Benchmarks:

- Unprecedented Bandwidth: HBM4 aims to achieve a 55% increase in total bandwidth compared to the leading HBM3e (roughly 1.2 TB/s).

- 3D Die Stacking (up to 16-High): SK hynix demonstrated a functional 12-high HBM4 stack and confirmed it is qualifying a world-first 16-high stack, enabling greater capacity within the same physical footprint.

- On-Die Computation (NMP): HBM4 integrates early forms of Near-Memory Processing (NMP). Simple logical functions can be performed directly on the memory stack before the data is passed to the main processor, offloading computation for vector-based operations like semantic search.
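To illustrate why near-memory processing helps semantic search, the sketch below compares how much data crosses the memory interface in two cases. It is a plain Python simulation, not SK hynix's NMP interface, which the announcement does not describe. The function names, the 2-byte value size, and the 8-byte result size are all assumptions made for the example.

```python
import heapq
import random


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def host_side_search(query, vectors, k, bytes_per_value=2):
    """Baseline: every stored vector crosses the memory interface before scoring."""
    bytes_moved = len(vectors) * len(query) * bytes_per_value
    scored = [(dot(query, v), i) for i, v in enumerate(vectors)]
    return heapq.nlargest(k, scored), bytes_moved


def near_memory_search(query, vectors, k, bytes_per_value=2, bytes_per_result=8):
    """Hypothetical NMP path: scoring is simulated as happening beside the DRAM,
    so only the query goes in and k (score, index) pairs come out."""
    scored = [(dot(query, v), i) for i, v in enumerate(vectors)]
    top_k = heapq.nlargest(k, scored)
    bytes_moved = len(query) * bytes_per_value + k * bytes_per_result
    return top_k, bytes_moved


# Hypothetical corpus: 1000 embeddings of dimension 64, top-5 search.
rng = random.Random(0)
DIM, COUNT, K = 64, 1000, 5
vectors = [[rng.uniform(-1.0, 1.0) for _ in range(DIM)] for _ in range(COUNT)]
query = [rng.uniform(-1.0, 1.0) for _ in range(DIM)]

host_hits, host_bytes = host_side_search(query, vectors, K)
nmp_hits, nmp_bytes = near_memory_search(query, vectors, K)
print(f"host-side: {host_bytes} bytes moved; near-memory: {nmp_bytes} bytes moved")
```

Both paths return the same top-k results; the difference is only in the modeled traffic, which in this toy setup drops from the whole corpus to the query plus a handful of results.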

“We are moving from building memory components to building memory systems,” an SK hynix executive stated. “HBM4 is not just memory; it is the cognitive memory layer that enables true IQ in AI agents.”

Market Implications: Securing the AI Core

The HBM4 rollout solidifies SK hynix’s dominance in the AI memory market, crucial as partners like NVIDIA and Microsoft aggressively scale their next-gen AI supercomputers. Microsoft’s Maia 200 chip and Google’s TPU v6 are both reportedly qualifying SK hynix HBM4 for their production roadmaps.

The announcement puts immense pressure on SK hynix’s competitors, specifically Micron and Samsung Electronics, the latter of which struggled during HBM3 qualification. Following the HBM4 news, SK hynix shares closed up 4.1% on the Korea Exchange, while Samsung’s closed down 1.2%.

SK hynix: Memory Roadmap 2026-2027

| Metric | HBM3e (Current Gen) | HBM4 (Announced today) | HBM4e (Concept) |
| --- | --- | --- | --- |
| Max Bandwidth | ~1.2 TB/s | >1.8 TB/s | >2.4 TB/s |
| Die Stacking | 12-High | 12 / 16-High | 16-High |
| I/O Technology | Microbumps | Hybrid Bonding | Hybrid Bonding (Gen 2) |
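As a quick consistency check on the roadmap figures, the sketch below computes the generation-over-generation bandwidth uplift and compares it with the 55% headline target. The dictionary and function names are illustrative. The HBM4 and HBM4e values are treated as lower bounds, since the table lists them with a ">" sign.

```python
# Bandwidth figures from the roadmap table, in TB/s.
# HBM4 and HBM4e are lower bounds (listed as ">1.8" and ">2.4").
ROADMAP_TB_S = {"HBM3e": 1.2, "HBM4": 1.8, "HBM4e": 2.4}

# Tallest stack listed for each generation in the table.
MAX_STACK_HEIGHT = {"HBM3e": 12, "HBM4": 16, "HBM4e": 16}


def uplift_percent(old: float, new: float) -> float:
    """Percentage increase from `old` to `new`."""
    if old <= 0:
        raise ValueError("baseline must be positive")
    return (new - old) / old * 100.0


# The article's headline target: 55% above the HBM3e figure.
headline_target_tb_s = ROADMAP_TB_S["HBM3e"] * 1.55

print(f"HBM3e -> HBM4 (table floor): +{uplift_percent(1.2, 1.8):.0f}%")
print(f"HBM4 -> HBM4e (table floor): +{uplift_percent(1.8, 2.4):.0f}%")
print(f"55% headline target: {headline_target_tb_s:.2f} TB/s")
print(f"Stack height ratio, 16-high vs 12-high: {16 / 12:.2f}x")
```

The table floor of 1.8 TB/s corresponds to a 50% uplift, so the 55% target sits just above it, consistent with the ">" in the table. The stack height ratio only translates into the same capacity gain if per-die capacity is unchanged, which the announcement does not state.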
