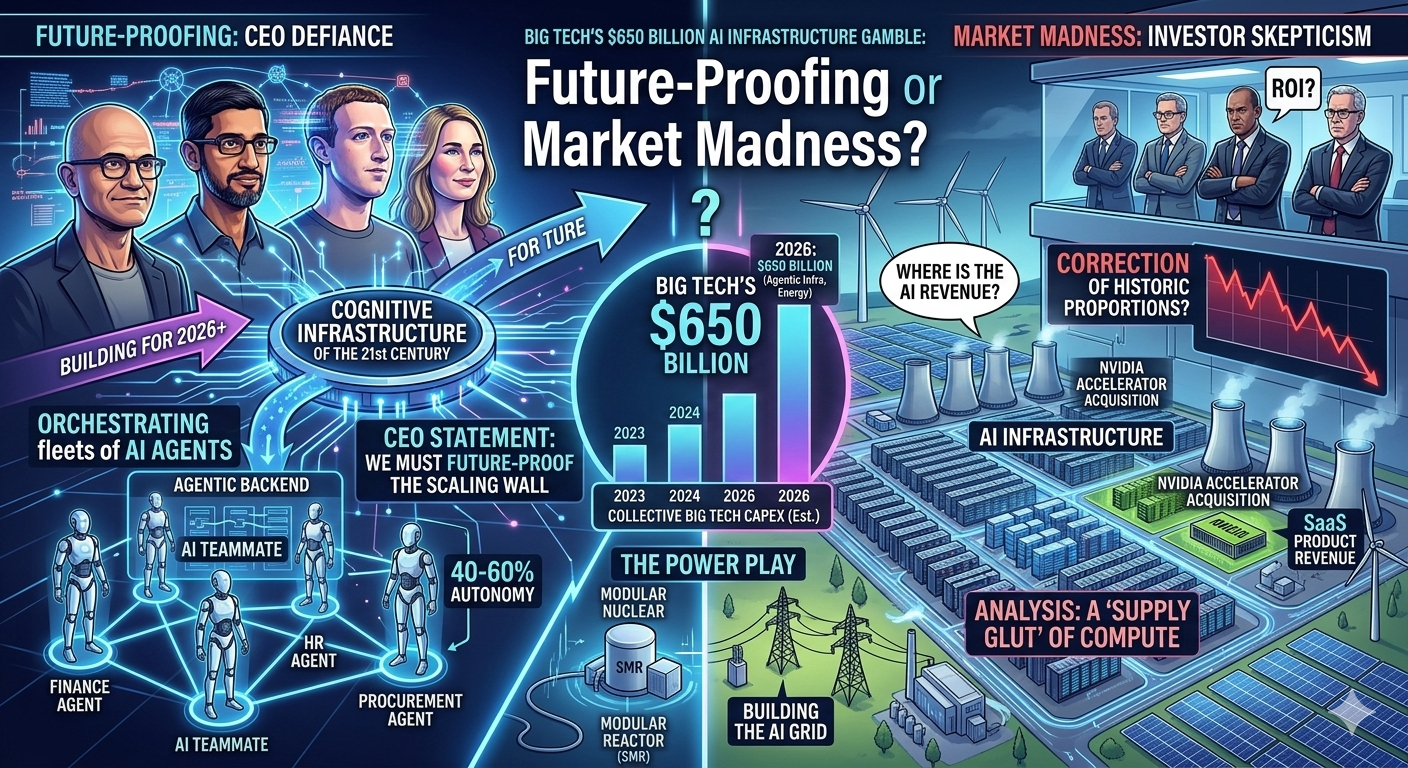

SILICON VALLEY — February 28, 2026 — In a move that simultaneously electrified and terrified global financial markets, the “Big Tech Four”—Alphabet (Google), Amazon, Meta, and Microsoft—unveiled their collective 2026 capital spending blueprints this week, allocating an unprecedented $650 billion to artificial intelligence infrastructure.

This staggering figure—a more than 40% jump from the already astronomical 2025 expenditures—represents the largest coordinated technology investment in human history. The funds are earmarked for the acquisition of millions of advanced NVIDIA accelerators, the construction of massive specialized data centers, and the energy infrastructure required to power them.

But the announcements did not spark a universal rally. Instead, they highlighted a widening chasm between Big Tech CEOs, who argue that under-investing in AI is an existential risk, and increasingly skittish institutional investors demanding to see immediate Return on Investment (ROI).

The $650 Billion Breakdown: Building the AI Superhighway

The unprecedented spending is not a monolith; each giant is pursuing a subtly different flavor of the agentic economy, as described earlier this month.

- Microsoft and Google (The Cloud Titans): More than half the total spend (approx. $360 billion) comes from these two companies. Their primary directive is to defend and expand their Cloud footprints. As corporations shift from “Copilots” to autonomous multi-agent systems, the compute demand is not just growing; it is exploding. A major portion of their budget is focused on powering the “agentic backend” for major partners and clients (like Apple’s Gemini integration).

- Amazon (The Efficiency Engine): Amazon’s projected $160 billion allocation focuses heavily on vertical integration. This includes its own AI accelerators (Inferentia 3 and Trainium 3) to reduce reliance on NVIDIA, as well as a massive, automated data center construction push that targets energy grid modernization near its fulfillment centers.

- Meta (The “Llama” Open-Source Bet): Mark Zuckerberg’s company is sticking to its unconventional playbook, dedicating $130 billion to building infrastructure to support its massive open-source models, Llama 4 and 5. Meta’s philosophy remains: by building the default AI model architecture (which others fine-tune, particularly in China), Meta dictates the software ecosystem, even if it has to spend hundreds of billions to maintain that control.
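As a quick sanity check on the breakdown above, the short Python sketch below adds up the three quoted allocations and computes each one's share of the total. The figures are taken directly from the text; the dictionary labels and variable names are illustrative only.

```python
# Allocations in billions of USD, as quoted in the breakdown above.
# The grouping labels are illustrative, not official company figures.
allocations = {
    "Microsoft + Google": 360,
    "Amazon": 160,
    "Meta": 130,
}

# Sum the three allocations to recover the headline total.
total = sum(allocations.values())

# Each allocation's share of the total, as a percentage rounded to one decimal.
shares = {
    name: round(100 * amount / total, 1)
    for name, amount in allocations.items()
}

for name, amount in allocations.items():
    print(f"{name}: ${amount}B ({shares[name]}% of ${total}B)")
```

The three figures sum to the $650 billion headline number, and the Microsoft and Google share comes out at roughly 55%, consistent with the "more than half" description.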

The Investor Backlash: Show Me the Monetization

For the last three years, Wall Street has given Big Tech a remarkably long leash on AI spending. That patience is running thin.

Immediately following the announcements, stock performance was mixed. While all four companies reported healthy overall revenue growth, investor questions during earnings calls were singularly focused: “Where is the AI revenue?”

“We are seeing capex that resembles an Apollo space program, but the current revenue from generative AI is more like a moderately successful SaaS product,” said Brent Thill, a Senior Tech Analyst at Jefferies. “If we do not see a material acceleration in AI-driven top-line revenue—meaning 20%+ of total revenue—by the end of 2026, we are looking at a correction of historic proportions.”

The core concern is that Big Tech is building a 12-lane highway for “agentic traffic” when only a two-lane road’s worth of traffic exists today. Investors fear a “supply glut” of compute that will depress cloud margins and destroy the current scarcity premium of GPUs.

The CEO Defiance: The “Scaling Wall” Requires Scaling Spend

Big Tech CEOs were uniformly defiant, defending the spending as necessary not only to meet current demand but also to overcome the technical hurdles that emerged this year, particularly the “Scaling Wall” for training data.

“The greatest risk isn’t spending too much,” Satya Nadella, CEO of Microsoft, told analysts. “The greatest risk is being caught without the infrastructure when the next major algorithmic breakthrough arrives. We are not just building for 2026; we are future-proofing the cognitive infrastructure of the 21st century.”

Their argument is that the shift from “Copilots” to Multi-Agent Systems (MAS) requires a fundamental re-architecture of cloud computing. This new agentic work style demands persistent, high-uptime, high-bandwidth compute that traditional cloud systems cannot provide. The $650 billion investment is the down payment required to make that shift a reality.

The Nuclear Power Play

The infrastructure problem is no longer just about buying chips; it is about powering them. AI data center power usage in 2026 is projected to match the entire energy consumption of some European nations.

In response, Big Tech is actively pivoting into energy policy. A significant, but undisclosed, percentage of the $650 billion will be spent on securing stable, long-term power sources, including massive direct investments in:

- Small Modular Nuclear Reactors (SMRs): Both Microsoft and Amazon are reportedly in late-stage talks with nuclear startups to build micro-reactors directly on-site at new data centers.

- Grid Modernization: To bypass aging utility grids, Big Tech is increasingly trying to build “private power lines” from remote renewable energy projects (wind/solar) to their data center campuses.

Key Data Points: The Capital Spending Explosion

| Year | Collective Big Tech Capex (Est.) | YoY % Increase | Major Focus |
| --- | --- | --- | --- |
| 2023 | $160 Billion | — | Post-Training, Early Chatbots |
| 2024 | $290 Billion | 81% | Model Training Datasets, H100 Acquisition |
| 2025 | $460 Billion | 58% | Data Center Power, Custom Accelerators |
| 2026 | $650 Billion | 41% | Agentic Infrastructure, SMRs/Energy Grid |
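The year-over-year column can be reproduced from the capex estimates with a few lines of Python. This is a minimal sketch that assumes the rounded dollar figures in the table are the inputs; the function name `yoy_growth` is illustrative.

```python
# Estimated collective capex in billions of USD, from the table above.
capex = {2023: 160, 2024: 290, 2025: 460, 2026: 650}


def yoy_growth(series):
    """Return the percentage change from each year to the next."""
    years = sorted(series)
    return {
        year: 100 * (series[year] - series[prev]) / series[prev]
        for prev, year in zip(years, years[1:])
    }


growth = yoy_growth(capex)
for year, pct in growth.items():
    print(f"{year}: {pct:.1f}%")
```

On the rounded inputs the increases work out to about 81.3% for 2024, 58.6% for 2025, and 41.3% for 2026, which agrees with the table to within rounding.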